AI-powered shift-left anomaly detection, before code review and code commits.

Brings quality by going beyond code analysis. LOCI’s model understands BIN, .pyc, JS, and mini files.

LOCI’s Features Advance AI-Augmented SW Development

Anomaly Detection

Predicts performance degradation before code commits and code review.

Test Strategy

Advises on test strategy for deviating symbol behavior.

Spec in the loop: maps test coverage to requirements.

Test Prioritization

Enables shift-left testing and reduces cloud resource usage.

Code Cleaning

Scans for similar segments, dead code, and cloned code to enhance performance. Strongly relevant to code review, technical debt management, and DevOps.

Benefits of LOCI’s AI Shift-Left Testing approach

Early defect detection

- Identifies issues when they’re easiest and cheapest to fix.

Faster time to market

- Reduces overall development cycle by catching issues early.

Improved Quality

- Continuous testing leads to higher overall software quality.

Cost Reduction

- Lowers the cost of fixing defects found late in development, reducing cloud resource usage and engineering time.

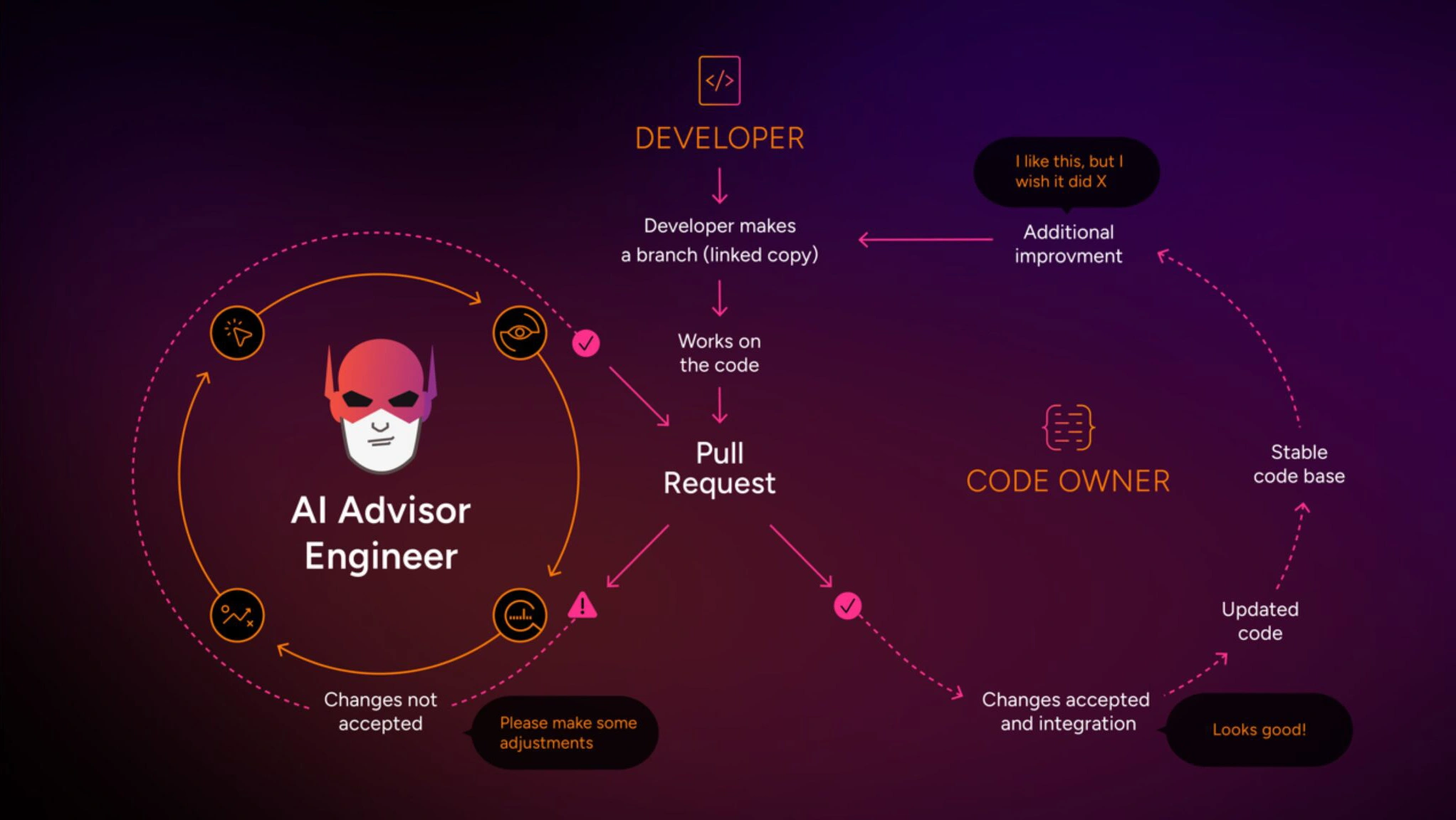

AI Advisor Engineer for Pull Requests

Equipped with Aurora Labs’ Large Code Language Model for BINs and SW artifacts, LOCI analyzes changes in pull requests and their behavioral impact.

LOCI identifies software anomalies, advises test strategy, suggests priority tests to run, and offers guidance on refactoring based on coding style.

“My proprietary LCLM, optimized for BIN files and SW language, outperforms regular LLMs, making it an essential tool for modern software development.”…LOCI June 2024

Plan my test strategy

- LOCI helps you identify the most critical tests and symbols to focus on when planning your test strategy. This involves:

- Identifying Top Tests: Listing the top-priority tests from your test suite, sorted by rank, which indicates their importance.

- Identifying Vulnerable Symbols: Listing the most vulnerable symbols, sorted by rank, which indicates their importance.

- By running the most important tests and writing tests for the most vulnerable symbols, you can ensure that your code is well tested and that potential issues are mitigated faster than ever before.
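
As a rough illustration of the ranking described above, the sketch below sorts tests and symbols by a rank score and keeps the top entries. The `RankedItem` type, the `top_ranked` helper, and all names and scores are hypothetical placeholders. They are not LOCI's API or its output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RankedItem:
    """A test or symbol with a hypothetical rank score (higher = more important)."""
    name: str
    rank: float


def top_ranked(items, limit):
    """Return the `limit` highest-ranked items, most important first."""
    return sorted(items, key=lambda item: item.rank, reverse=True)[:limit]


# Made-up example data, for illustration only.
tests = [
    RankedItem("test_boot_sequence", 0.91),
    RankedItem("test_can_bus_timeout", 0.42),
    RankedItem("test_ota_update", 0.77),
]
symbols = [
    RankedItem("parse_frame", 0.88),
    RankedItem("crc32_update", 0.35),
    RankedItem("flash_write", 0.95),
]

top_tests = top_ranked(tests, limit=2)
top_symbols = top_ranked(symbols, limit=2)

print("Run first:", [t.name for t in top_tests])
print("Write tests for:", [s.name for s in top_symbols])
```

In practice the scores would come from the tool's analysis. Here they are hard-coded only to show the sort-and-select step.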

Show me where to Optimize

- LOCI shows you where to optimize by identifying your codebase’s most significant duplicate code segments. These segments can be refactored to reduce redundancy and improve code maintainability.
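
To make the idea of duplicate code segments concrete, here is a minimal sketch of one simple, textual way to find them: slide a fixed-size window over each file and group identical windows after whitespace normalization. This is an assumption for illustration only and is not how LOCI's model works. The function names, the window size, and the sample files are hypothetical.

```python
from collections import defaultdict


def normalize(line):
    """Collapse whitespace so formatting differences do not hide duplicates."""
    return " ".join(line.split())


def find_duplicate_segments(files, window=3):
    """Map each repeated `window`-line segment to the places it occurs.

    `files` maps a file name to its source text. Returns a dict of
    {segment_text: [(file_name, first_line_number), ...]} for segments
    that appear more than once.
    """
    seen = defaultdict(list)
    for name, text in files.items():
        # Keep original line numbers while skipping blank lines.
        numbered = [(i, normalize(raw)) for i, raw in enumerate(text.splitlines(), 1)]
        lines = [(i, line) for i, line in numbered if line]
        for start in range(len(lines) - window + 1):
            chunk = lines[start:start + window]
            segment = "\n".join(line for _, line in chunk)
            seen[segment].append((name, chunk[0][0]))
    return {seg: locs for seg, locs in seen.items() if len(locs) > 1}


# Made-up sample sources, for illustration only.
files = {
    "a.c": "x = read();\nif (x < 0)\n    x = 0;\nsend(x);\n",
    "b.c": "y = 1;\nx = read();\nif (x < 0)\n  x = 0;\nlog(x);\n",
}

duplicates = find_duplicate_segments(files)
for segment, locations in duplicates.items():
    print(locations)
```

Exact matching like this only catches cloned text. Detecting "similar" segments would need a fuzzier comparison, which is outside the scope of this sketch.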

Let’s mitigate risk

- LOCI helps you mitigate risk by identifying anomalies and vulnerable symbols in your codebase so they can be addressed. Vulnerable symbols are those not exercised by current test scenarios, so identifying and covering them is crucial to preventing potential issues.
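
Taking the description above at face value (a vulnerable symbol is one that no current test scenario exercises), the check can be sketched as a set difference. The `untested_symbols` helper, the coverage mapping, and the symbol names are hypothetical and do not reflect LOCI's data model.

```python
def untested_symbols(all_symbols, coverage):
    """Return symbols that no test scenario exercises, sorted by name.

    `coverage` maps a test name to the set of symbols it exercises.
    """
    covered = set().union(*coverage.values())
    return sorted(set(all_symbols) - covered)


# Made-up example data, for illustration only.
all_symbols = ["parse_frame", "crc32_update", "flash_write", "reset_watchdog"]
coverage = {
    "test_parse": {"parse_frame", "crc32_update"},
    "test_flash": {"flash_write"},
}

vulnerable = untested_symbols(all_symbols, coverage)
print("Untested symbols:", vulnerable)
```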

LOCI For Refactoring

LOCI reduces engineering time by 20-30% per sprint!

LOCI delivers AI-powered code refactoring that adapts to your project, ensuring smarter, safer, and more efficient code changes. We tuned the model to be non-creative and to stick to the SW language rules, and it adapts to your own or your organization’s coding style.

Scan

- Scan for Redundant, Cloned, and Similar segments

- Filter per module, repo, and segment

Refactoring

- Refactoring creativity monitored and ‘relaxed’

- Optimized for Embedded Systems

- Works across repos and projects, with a revert mechanism

Fix

- Applying your own fix updates all similar code segments at once.

- Apply changes to all segments with one click.

- Address all warnings for every segment with a single click, in accordance with warnings from third-party tools.

Start Your Journey with LOCI

Free, Pro and Enterprise. You can subscribe month-to-month or pay for a full year up-front for a 20% discount.

Free, 10-Day Trial

Non-commercial and commercial usage

$0 USD

Coming Soon

Scan for redundant code

Scan for cloned code

Scan for similar code

Test Selection

Test Strategy

Refactoring

Anomaly Detection

Risk Mitigation

SW Update Validation

Intelligent Conversation

WEB API

Free Support

Pro

Full Package – Non-commercial and commercial usage

$12 USD

Coming Soon

Full transparency of redundant, duplicate and cloned segments

Scan for Redundant, Cloned, and Similar segments

Filter per module, repo, and segment

Refactor creativity monitored and “relaxed”

Optimized for Embedded Systems

Works across repos and projects, with a revert mechanism

Applying your own fix updates all similar code segments at once

Apply changes to all segments with one click

Address all warnings for every segment with a single click, in accordance with warnings from third-party tools

Test Selection

Test Strategy

Refactoring

Anomaly Detection

Risk Mitigation

SW Update Validation

Intelligent Conversation

WEB API

Free Support

Enterprise

Full package with experience in implementing standards

$100 USD / Month

$1250 USD / Year

Coming Soon

- Full package with experience in implementing standards

- Free Support

Frequently Asked Questions

- General

- Responsible AI

Why is continuous refactoring essential, not just an option?

Continuous refactoring is not just an option but an essential practice in software development. It fosters code maintainability, quality, readability, performance, and adaptability while reducing technical debt and improving testability. By investing time and effort in refactoring, development teams can build software that is more robust, flexible, and sustainable over time.

Why should refactoring and optimization be a part of every sprint?

Incorporating refactoring and optimization tasks into every sprint ensures that the development process remains iterative, adaptive, and focused on delivering high-quality software that meets both technical and business requirements. It helps the team build and maintain a sustainable pace of development while continuously improving the overall product.

When is refactoring absolutely necessary?

Refactoring is absolutely necessary in various scenarios to maintain code quality, improve performance, enhance readability, and prepare the codebase for future changes and growth. It is an essential practice in software development that helps ensure the long-term success and sustainability of the software product.

How does LOCI help prepare code for compliance early on?

LOCI helps prepare code for compliance early on by setting the refactoring tone and style according to your project’s SW coding standards and conventions.

Aurora Labs has developed a responsible AI solution featuring a Large Code Language Model, leveraging transformer-based architecture to work seamlessly with Software CI/CD artifacts. This cutting-edge technology ensures complete data privacy, keeping all artifacts secure and confined within the customer’s premises, with no risk of leaks or external sharing.

From NLP for SW lines of code to Large Code Language Model

2016-2018

NLP, LSTM, ART / Fuzzy ART, statistical models, 3diff

2018-2021

Added: Autoencoders, GNG, distributed algorithms, Clustering

2021-2022

Added: Transformer model, graph-based algorithms

A Large Code Language Model (our LCLM) and a Large Language Model (LLM) are both types of AI models designed to understand and generate human-like text. However, there are key differences between them:

Domain Specialization

- Our LCLM: Specifically tailored for understanding code and generating refactored code. It is optimized to handle programming languages, BIN files, code syntax, and software development tasks.

- LLM: Designed for a broader range of text-based tasks, including natural language understanding and generation. It handles general language tasks like translation, summarization, and conversation.

Training Data

- Our LCLM: Trained predominantly on BIN files, traces, source code, documentation, and other programming-related texts. The focus is on repositories, CI/CD artifacts, coding platforms, and technical manuals.

- LLM: Trained on a vast and diverse set of text data, including books, articles, websites, and other general language sources.

Use Cases

- Our LCLM: Used for software development tasks such as anomaly detection, test strategy, test prioritization, and refactoring.

- LLM: Used for general language tasks like translation, summarization, and conversation.

Model Architecture and Features

- Our LCLM: Features tailored tokenizers and vocabularies, 1000x smaller, designed to handle the syntax and structure of programming languages efficiently. It may include specialized pipelines for BIN files, code analysis, and code generation.

- LLM: Uses general-purpose architectures and tokenizers that are effective for a broad range of language tasks.